I wrote this on Twitter the other day and it got a bit more traction than I expected. The responses told me I'd hit a nerve. This tension between speed-to-market and building something people actually want to use is real, and most founders are navigating it without a clear map.

Here's where I stand.

I see a lot of weekend launches that feel hollow. Landing pages with a Stripe button and barely any functionality. AI-generated copy, a Tailwind template, and a prayer that someone will hand over their credit card.

I won't sign up for these things. I definitely won't give them my payment details.

There's no trust signal, no reason to believe this thing will exist in three months, and frankly, it often feels like the builder is testing whether they can launch fast, not whether they're solving a real problem.

But I also overbuild. I take longer than I probably should to ship things. Every time I start a new project, I tell myself I'll keep it simple and launch quickly. Then I add another feature, refine the UI one more time, write better error messages, and before I know it, weeks have turned into months.

I struggle to ship something that feels unfinished. So I'm caught in this paradox, watching people launch scrappy MVPs while I'm still polishing details that probably only I notice.

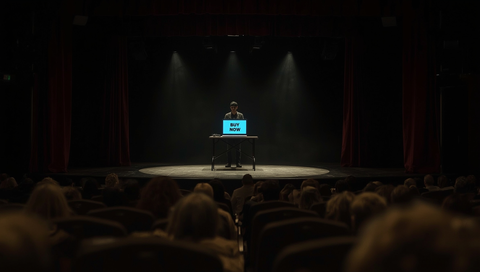

The Weekend Launch Theatre

The internet is full of "I built this in a weekend with AI" posts. Some of them are impressive. Most of them are landing pages pretending to be products.

There's a cargo cult forming around the idea of shipping fast, where people confuse activity with progress. They think launching equals validating, but they're often just testing whether they can slap together a website quickly.

When you ask people to pay for something that barely exists, you're essentially asking them to bet on your ability to deliver something you haven't built yet, with no evidence that you'll follow through or that the thing will actually work when you do.

This isn't a new problem: bad MVPs have been around as long as the concept itself.

The real issue is that speed has become the only metric that matters, and when velocity is your north star, you stop optimizing for the right outcomes. You're measuring your ability to build quickly instead of whether customers want your solution. Those are very different experiments, and only one of them actually matters.

What I'm seeing often looks more like minimum buildable products than minimum viable ones.

The question seems to be "what's the least we can ship so we can say we shipped something?" rather than "what's the least we need to validate this idea?" That might scratch an itch for productivity theatre, but it doesn't tell you anything useful about whether you're building something people actually want.

When Polish Actually Matters

Here's what I've learned from shipping actual products.

Context is everything.

How polished something needs to be depends entirely on your audience and your space. With AutoChangelog, I'm building for developers, designers, and product teams. People like me.

If I launch with a janky interface and broken edge cases, they'll dismiss it immediately. The bar for credibility is higher when you're selling to people who build products for a living. They can smell a weekend project from a mile away.

Some markets demand trust before transaction. Fintech needs polish. Developer tools need polish. B2B products need polish. If you're asking someone to integrate your API into their production systems or trust you with their financial data, you'd better not have placeholder copy and broken loading states.

When expectations are high, rough edges become deal-breakers.

Compare that with my newsletter sites, like DailyPhotoTips and TheDailyPreset, which are essentially single-page websites with an email form.

That's the right amount of polish for that play. Nobody cares if the landing page has subtle animations or a perfect mobile experience as long as the copy is clear and the form works.

But that's because I'm not asking for trust in the interface. I'm asking for trust in my ability to deliver good content, and I can prove that with samples.

The concept of a Minimum Lovable Product exists specifically to address this. The goal is building something people love from the start, even if it only does one thing, rather than something they merely tolerate. The difference between viable and lovable is often the difference between someone trying your product once and someone telling their friends about it.

That gap matters more in crowded markets where your users have plenty of alternatives.

The mistake people make is applying one-size-fits-all "ship fast" advice without considering who they're building for. A scrappy MVP might work great if you're testing demand for a new market category or building something for early adopters who are used to rough edges.

It falls flat when you're the 47th project management tool launching this month and your users have already used Asana, Linear, and Notion. In that competitive landscape, users will just close the tab and move on to something that feels more finished.

The Case for Ugly Speed

Let me make the strongest argument I can for the opposite view, because it has merit.

There's genuine value in getting something into users' hands quickly. Market research is valuable, customer feedback is valuable, and the risk of building in silence for months is that you might build the wrong thing for too long.

I've seen this happen. Smart founders who spent six months perfecting a product only to launch and discover that nobody actually wanted what they built.

Sometimes ugly wins because it validates demand faster. If you can throw together a landing page, drive some traffic to it, and see if people actually click the "get early access" button, you've learned something without writing a single line of product code.

That's useful information. You can kill a bad idea in a weekend instead of wasting months building it.

That said, I also know founders who built for months in silence before launching. They had deep conviction about the problem they were solving, they knew their market, and they trusted their instincts, and they were right. They launched polished products that found product-market fit quickly because they understood their customers well enough to build the right thing from the start.

That approach works when you have genuine insight, not when you're guessing.

The hidden cost of polish is opportunity cost. Every hour you spend perfecting the onboarding flow is an hour you're not spending talking to customers or testing your assumptions.

Every day you delay launch is another day you're operating on guesses instead of data. Perfectionism is a trap that kills more startups than shipping too early. I know this intellectually, even if I struggle with it personally.

But here's where it gets tricky. Fast is only good if you're validating the right thing. If you ship a landing page and get 1,000 email signups, what have you actually learned? You've learned that people liked your headline. You haven't learned if they'll use your product. You definitely haven't learned if they'll pay for it.

Speed only accelerates your learning if you're measuring the right signals.

What "Viable" Actually Means

Let's come back to my original tweet.

A real MVP proves someone cares enough to use it more than once and actually pay for it. That's the bar. Not signups, not waitlist subscriptions, and not social media engagement. Paying customers who come back.

For me, somewhere between 10 and 25 paying customers is proof of early traction. That's enough signal that you're onto something. One customer might be a favor. Five customers might be luck. But 10 to 25 people who found your product, decided it was worth paying for, and stuck around long enough to use it multiple times? That's validation. That's viable.
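If you want to hold yourself to that bar, it reduces to a simple count over your own usage data. Below is a minimal Python sketch under some assumptions. It assumes a hypothetical event log of `(customer_id, event_type)` pairs, with made-up event names `"payment"` and `"session"`. It reads "use it more than once" as two or more sessions. It takes the low end of the 10 to 25 range as the default threshold. The function names are illustrative and don't come from any particular analytics tool.

```python
from collections import defaultdict

# Hypothetical event log: (customer_id, event_type) pairs.
# "payment" marks a paid charge; "session" marks one use of the product.
Event = tuple[str, str]


def viable_customers(events: list[Event], min_sessions: int = 2) -> set[str]:
    """Customers who have paid at least once AND used the product
    at least `min_sessions` times (i.e. they came back)."""
    paid: set[str] = set()
    sessions: dict[str, int] = defaultdict(int)
    for customer_id, kind in events:
        if kind == "payment":
            paid.add(customer_id)
        elif kind == "session":
            sessions[customer_id] += 1
    return {c for c in paid if sessions[c] >= min_sessions}


def has_early_traction(events: list[Event], threshold: int = 10) -> bool:
    # Assumed threshold: the low end of the 10-25 range; adjust to taste.
    return len(viable_customers(events)) >= threshold


if __name__ == "__main__":
    events = [
        ("ana", "payment"), ("ana", "session"), ("ana", "session"),
        ("ben", "session"), ("ben", "session"),  # came back, never paid
        ("cy", "payment"), ("cy", "session"),    # paid, never came back
    ]
    print(viable_customers(events))    # {'ana'}
    print(has_early_traction(events))  # False
```

Signups and waitlist entries never appear in this count. Only customers who both pay and return move the number, which is the point of the bar.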

The core issue is understanding what you need to prove.

Every product launch is an experiment, but you need to be clear about what you're testing. Are you testing whether people understand the problem you're solving? Are you testing whether they'll pay for a solution? Are you testing whether your specific solution is better than alternatives?

Each of these questions requires a different level of fidelity in your product.

Here's a Framework I Use

Before you launch anything, answer these three questions:

- Who is this for, specifically? Not "small businesses" or "busy professionals." Actual people with actual problems you can describe in detail.

- What's the minimum level of execution they'll trust? A teenager launching a social app can get away with rough edges. A company selling security software cannot.

- What behaviour am I trying to validate? Be specific. "Will people sign up" is not the same as "will people integrate this into their workflow," which is not the same as "will people pay monthly for this."

If you can't answer these questions clearly, you're not ready to launch anything, whether it's an MVP or a polished v1. The problem isn't your execution speed. The problem is you don't know what you're building yet.

But how do you know which approach to use: an MVP or an MLP?

| Factor | Build an MVP | Build an MLP |
|---|---|---|
| Market Maturity | New category, few alternatives | Established space, many competitors |
| User Expectations | Early adopters, high tolerance for rough edges | Mainstream users, comparison shopping |
| Primary Goal | Test demand for the solution | Test demand for YOUR solution |
| Validation Metric | Will they try it? | Will they love it and stay? |
| Timeline | Days to weeks | Weeks to months |
| Risk | Building the wrong thing | Taking too long, missing window |

Stop Asking the Wrong Question

Here's the real answer. Both paths work if you're clear about what you're trying to learn. The trap is confusing motion with progress on either end. Shipping fast doesn't matter if you're not measuring the right things. Building for months doesn't matter if you're building the wrong things.

When I look at my own history, I see a pattern. Contrastly launched with thoughtful features and grew from there. The products we built at work took time to get right before launching. AutoChangelog is taking longer because the audience expects quality. My newsletter sites launched quickly because they needed less.

These weren't accidents or mistakes. They were decisions based on what each product needed to prove and who it was for.

Maybe the whole framing is broken. Instead of debating MVP versus polished launches, we should be asking what's the minimum credible version for this specific audience and validation goal.

Sometimes that's a landing page and a quick form. Sometimes that's a fully functional product with thoughtful UX. Both can be right. Neither is universally correct.

The only wrong move is shipping something you don't believe in because Twitter told you to ship fast, or holding back something that's ready because you're scared of criticism.

Build what you'd actually want to use yourself, ship it to people who have the problem you're solving, and measure whether they care enough to come back and pay.

Everything else is noise.

The goal is figuring out if you're solving a problem that matters to people who will pay for the solution. The faster you can answer that question with real evidence, the better. How you get there is up to you.